HFEL: Joint Edge Association and Resource Allocation for Cost-Efficient Hierarchical Federated Edge Learning

Published in: IEEE Transactions on Wireless Communications

This paper studies device scheduling and resource allocation in a multi-tier (hierarchical) federated learning setting.

0x00 Abstract

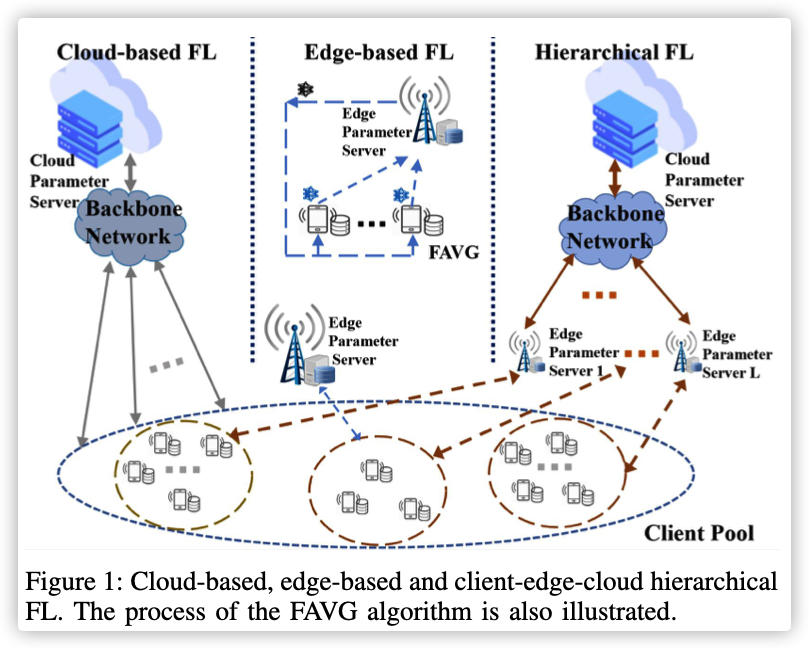

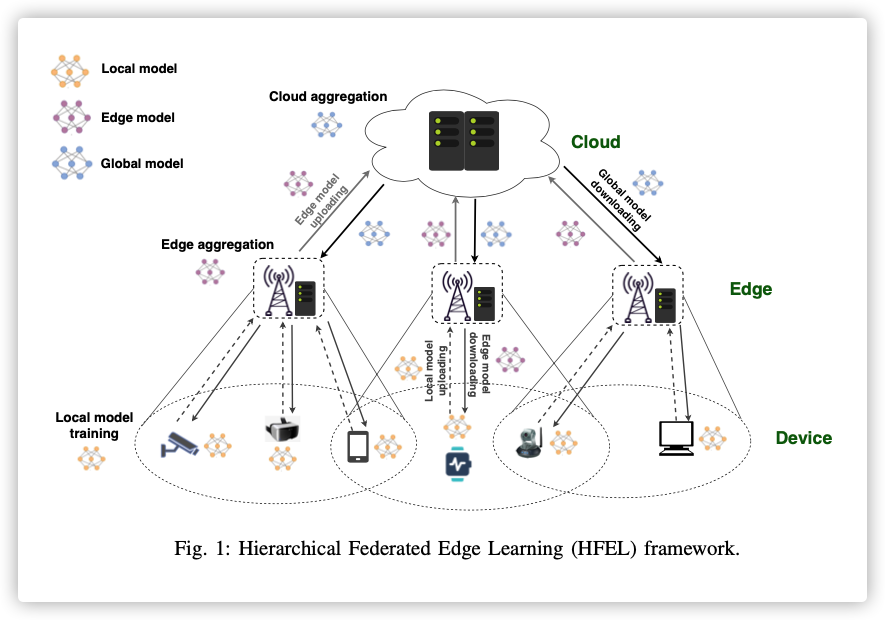

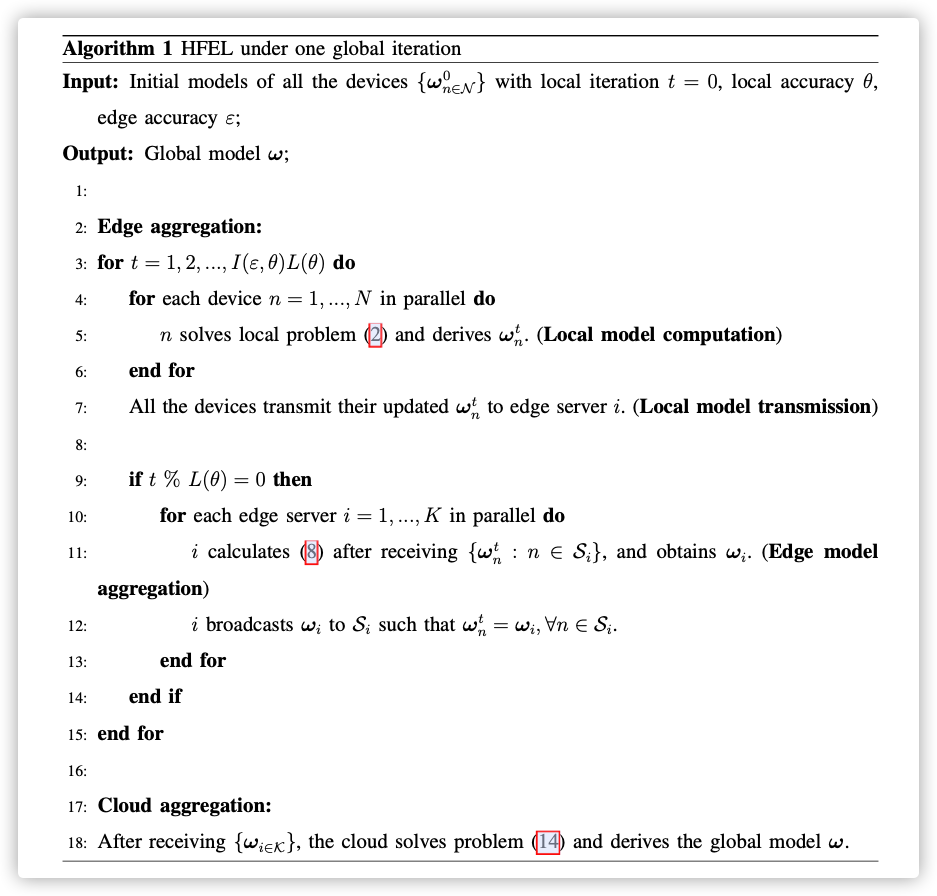

Using edge servers as intermediaries that perform partial model aggregation close to the devices relieves core-network transmission overhead and holds great potential for low-latency, energy-efficient FL. Hence we introduce a novel Hierarchical Federated Edge Learning (HFEL) framework in which model aggregation is partially migrated from the cloud to edge servers.

Key Words: Federated Learning, Resource Scheduling

0x01 INTRODUCTION

Mobile edge computing (MEC) allows delay-sensitive and computation-intensive tasks to be offloaded from distributed mobile devices to nearby edge servers, offering real-time response and high energy efficiency.

We propose a novel Hierarchical Federated Edge Learning (HFEL) framework. In it, edge servers are typically deployed at fixed base stations and act as intermediaries between mobile devices and the cloud. They perform edge aggregation of the local models transmitted from nearby devices.

Once each edge server's aggregated model reaches a given learning accuracy, the updated edge models are transmitted to the cloud for global aggregation.
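The following is a minimal sketch of the two-level aggregation described above. It assumes FedAvg-style averaging: each local model is weighted by its device's number of training samples, and each edge model is weighted by the total samples it covers. Models are plain lists of floats. The function names (`weighted_average`, `edge_aggregate`, `cloud_aggregate`) are hypothetical. The accuracy check that decides when an edge server uploads is left out.

```python
from typing import List, Sequence


def weighted_average(models: Sequence[Sequence[float]],
                     weights: Sequence[float]) -> List[float]:
    """Element-wise weighted mean of parameter vectors of equal length."""
    total = float(sum(weights))
    dim = len(models[0])
    return [sum(w * m[i] for m, w in zip(models, weights)) / total
            for i in range(dim)]


def edge_aggregate(local_models: Sequence[Sequence[float]],
                   data_sizes: Sequence[int]) -> List[float]:
    """Edge server: weight each device's model by its sample count (assumed FedAvg-style)."""
    return weighted_average(local_models, data_sizes)


def cloud_aggregate(edge_models: Sequence[Sequence[float]],
                    edge_data_sizes: Sequence[int]) -> List[float]:
    """Cloud: weight each edge model by the total samples of its devices (assumption)."""
    return weighted_average(edge_models, edge_data_sizes)


# Usage: edge A serves two devices, edge B serves one device.
edge_a = edge_aggregate([[1.0, 2.0], [3.0, 4.0]], [10, 30])  # [2.5, 3.5]
edge_b = edge_aggregate([[5.0, 6.0]], [40])                  # [5.0, 6.0]
global_model = cloud_aggregate([edge_a, edge_b], [40, 40])   # [3.75, 4.75]
```

Here the edge server weights its two devices 1:3 by sample count. The cloud then averages the two edge models equally, because each covers 40 samples.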

We still face the following challenges:

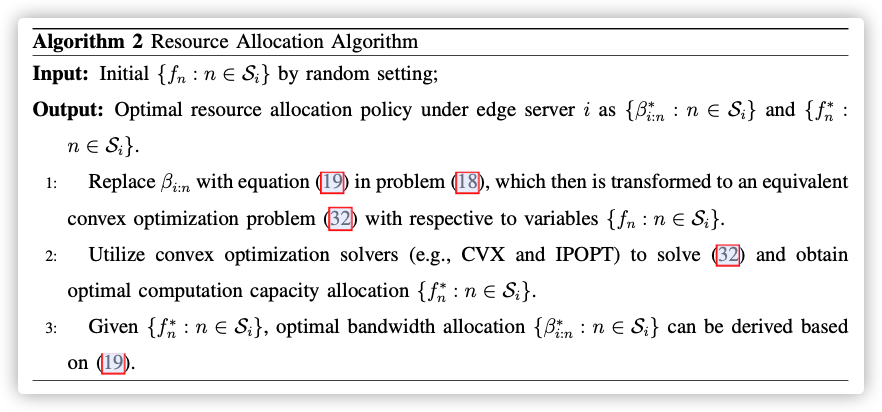

How can computation and communication resources be jointly allocated to each device to accelerate training and save energy?
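To make this challenge concrete, here is a sketch of a per-device cost model. It uses a model common in MEC papers, not necessarily the paper's exact formulation:

- Local computation takes c*D/f seconds and kappa*c*D*f^2 joules per iteration.
- The model upload uses a Shannon-rate link whose rate grows with the allocated bandwidth.

The constants `KAPPA` and `N0`, the `Device` fields and `device_cost` are all hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Tuple

# Hypothetical constants, for illustration only.
KAPPA = 1e-28   # effective switched-capacitance coefficient of the CPU
N0 = 4e-21      # noise power spectral density in W/Hz (about -174 dBm/Hz)


@dataclass
class Device:
    cycles_per_sample: float  # c: CPU cycles needed per training sample
    num_samples: int          # D: size of the local dataset
    tx_power: float           # p: transmit power in W
    channel_gain: float       # g: channel power gain to the edge server
    model_bits: float         # s: size of the uploaded model in bits


def device_cost(dev: Device, cpu_freq: float, bandwidth: float,
                local_iters: int) -> Tuple[float, float]:
    """Return (time in s, energy in J) for local training plus one model upload."""
    cycles = local_iters * dev.cycles_per_sample * dev.num_samples
    t_cmp = cycles / cpu_freq                 # faster CPU -> less time
    e_cmp = KAPPA * cycles * cpu_freq ** 2    # ...but quadratically more energy
    # Shannon rate; noise power grows with the allocated bandwidth.
    snr = dev.tx_power * dev.channel_gain / (N0 * bandwidth)
    rate = bandwidth * math.log2(1 + snr)
    t_com = dev.model_bits / rate
    e_com = dev.tx_power * t_com
    return t_cmp + t_com, e_cmp + e_com


dev = Device(cycles_per_sample=2e4, num_samples=500, tx_power=0.2,
             channel_gain=1e-7, model_bits=1e6)
slow = device_cost(dev, cpu_freq=1e9, bandwidth=1e6, local_iters=5)
fast = device_cost(dev, cpu_freq=2e9, bandwidth=1e6, local_iters=5)
wide = device_cost(dev, cpu_freq=1e9, bandwidth=2e6, local_iters=5)
```

Raising `cpu_freq` shortens computation but increases computation energy quadratically. Extra bandwidth shortens the upload. A joint allocation has to balance these per device. This sketch only evaluates the costs; it does not solve that optimization.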

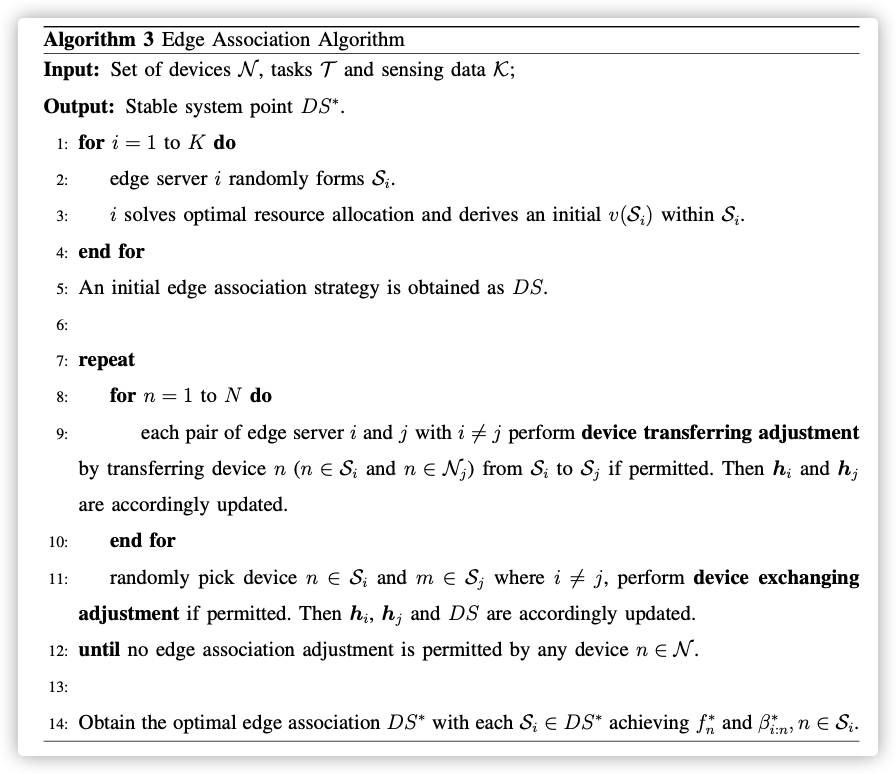

How can a proper set of devices be associated with each edge server for efficient edge model aggregation?

Core ideas of the paper:

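- Hierarchical aggregation: edge servers act as intermediate nodes. Upper-level (cloud) aggregation happens only after the edge model reaches the specified accuracy.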

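- Divide and conquer at the bottom layer: the set of training devices is partitioned, and each edge server manages a different subset of devices.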

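- Dynamic grouping: the initial partition may not be optimal, so it is adjusted by moving a device to another edge server. A move is made only if it lowers the overall resource cost.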

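- Resource allocation: energy consumption is summed over devices, while time consumption is the maximum over devices, i.e., it is set by the slowest device.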
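The grouping and cost rules in the list above can be sketched as a local search. This sketch assumes a simplified setting:

- Each device has a fixed (time, energy) cost for each edge server. In the paper, these costs depend on the resource allocation inside each group.
- A group's cost is its summed energy plus `lam` times its maximum time.
- Only single-device transfers are tried.

`group_cost`, `total_cost`, `associate` and the weight `lam` are hypothetical names.

```python
from typing import Dict, List, Tuple

# Hypothetical fixed cost table: cost[device][server] = (time in s, energy in J).
CostTable = Dict[int, Dict[int, Tuple[float, float]]]


def group_cost(members: List[int], server: int, cost: CostTable, lam: float) -> float:
    """Energy is summed over the group; time is that of the slowest member."""
    if not members:
        return 0.0
    energy = sum(cost[d][server][1] for d in members)
    latency = max(cost[d][server][0] for d in members)
    return energy + lam * latency


def total_cost(assign: Dict[int, int], servers: List[int],
               cost: CostTable, lam: float) -> float:
    """Sum of group costs over all edge servers for a device -> server assignment."""
    return sum(group_cost([d for d, s in assign.items() if s == srv], srv, cost, lam)
               for srv in servers)


def associate(assign: Dict[int, int], servers: List[int],
              cost: CostTable, lam: float) -> Tuple[Dict[int, int], float]:
    """Transfer-only local search: move one device at a time, keep strict improvements."""
    assign = dict(assign)
    current = total_cost(assign, servers, cost, lam)
    improved = True
    while improved:
        improved = False
        for d in list(assign):
            for srv in servers:
                old = assign[d]
                if srv == old:
                    continue
                assign[d] = srv
                new = total_cost(assign, servers, cost, lam)
                if new < current:
                    current, improved = new, True  # keep the transfer
                else:
                    assign[d] = old                # undo the transfer
    return assign, current


# Toy example: 3 devices and 2 edge servers, all devices start at server 0.
cost = {0: {0: (1.0, 1.0), 1: (5.0, 3.0)},
        1: {0: (4.0, 2.0), 1: (1.0, 1.0)},
        2: {0: (1.0, 1.0), 1: (1.0, 2.0)}}
best, best_cost = associate({0: 0, 1: 0, 2: 0}, [0, 1], cost, lam=1.0)
# best == {0: 0, 1: 1, 2: 0}, best_cost == 5.0 (down from 8.0)
```

A move is kept only when it strictly lowers the total cost, and there are finitely many assignments, so the loop always terminates. It may stop at a local optimum rather than the global one.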

I'll write up the rest when I have time... new work has just come in again...