Client-Edge-Cloud Hierarchical Federated Learning

0x00 Abstract

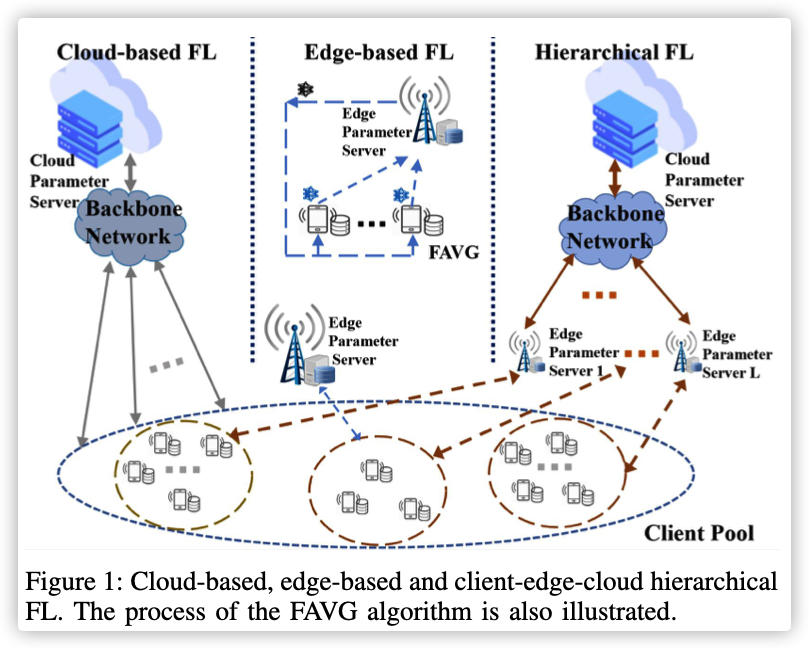

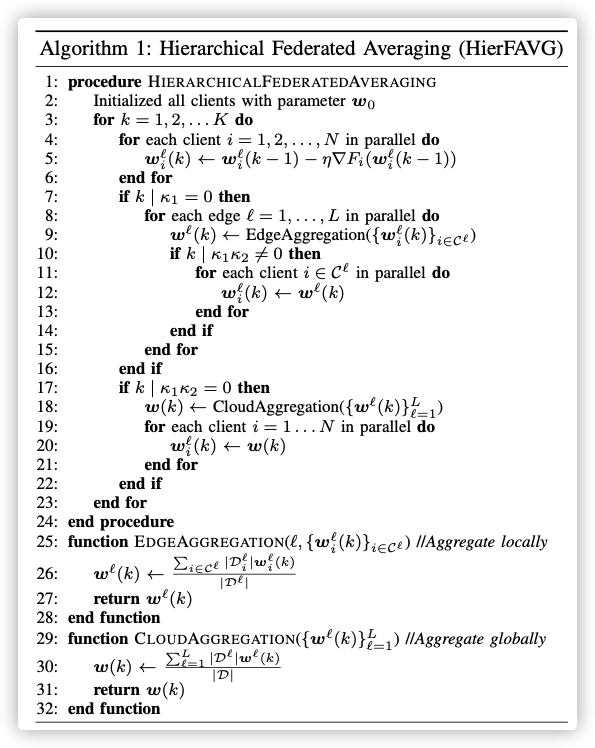

The cloud server can access more data but with excessive communication overhead and long latency, while the edge server enjoys more efficient communications with the clients. To combine their advantages, we propose a client-edge-cloud hierarchical Federated Learning system, supported with a HierFAVG algorithm that allows multiple edge servers to perform partial model aggregation.

Particularly, it is shown that by introducing the intermediate edge servers, the model training time and the energy consumption of the end devices can be simultaneously reduced compared to cloud-based Federated Learning.

Key Words: Mobile Edge Computing, Federated Learning, Edge Learning

0x01 INTRODUCTION

With edge-based FL, it is possible to pursue a better trade-off between computation and communication. Nevertheless, one disadvantage of edge-based FL is the limited number of clients each server can access, leading to inevitable training performance loss.

From the above comparison, we see a necessity in leveraging a cloud server to access the massive training samples, while each edge server enjoys quick model updates with its local clients.

Compared with cloud-based FL, hierarchical FL will significantly reduce the costly communication with the cloud, supplemented by efficient client-edge updates, thereby resulting in a significant reduction in both the runtime and the number of local iterations. On the other hand, as more data can be accessed by the cloud server, hierarchical FL will outperform edge-based FL in model training.

First, by extending the FAVG algorithm to the hierarchical setting, will the new algorithm still converge? Given the two levels of model aggregation (one at the edge, one at the cloud), how often should the models be aggregated at each level? Moreover, by allowing frequent local updates, can a better latency-energy tradeoff be achieved?

0x02 FEDERATED LEARNING SYSTEMS

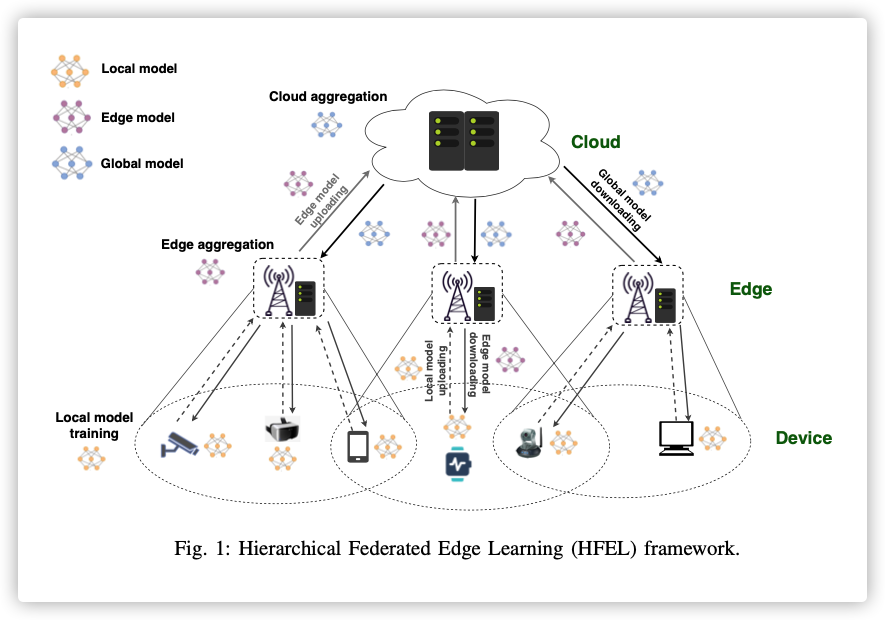

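The overall architecture is the one shown on the far right of the figure:

The overall idea is to perform federated aggregation at two levels!

- Lower level: edge servers and training nodes (clients)

- Upper level: the cloud server and the edge servers

Aggregation scheme:

- The lower level aggregates once after each round of local training

- The upper level aggregates once only after each round of lower-level aggregation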
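The two-level scheme above can be sketched in a few lines of NumPy. This is only a minimal illustration under stated assumptions, not the paper's reference implementation. The least-squares model, the function names (`weighted_average`, `local_sgd_step`, `hier_favg`) and the parameter names `kappa1` (local updates per edge aggregation) and `kappa2` (edge aggregations per cloud aggregation) are all chosen here for illustration.

```python
import numpy as np

def weighted_average(models, sizes):
    """Average parameter vectors, weighted by the sample count behind each one."""
    sizes = np.asarray(sizes, dtype=float)
    return np.average(np.stack(models), axis=0, weights=sizes)

def local_sgd_step(w, X, y, lr):
    """One full-batch gradient step on a least-squares loss (illustrative model)."""
    grad = X.T @ (X @ w - y) / len(y)
    return w - lr * grad

def hier_favg(edges, dim, kappa1, kappa2, cloud_rounds, lr=0.1):
    """Hypothetical sketch of two-level federated averaging.

    edges: list of edge servers, each given as a list of (X, y) client datasets.
    """
    w_cloud = np.zeros(dim)
    for _ in range(cloud_rounds):
        edge_models, edge_sizes = [], []
        for clients in edges:
            w_edge = w_cloud.copy()              # edge starts from the cloud model
            for _ in range(kappa2):              # edge aggregations per cloud round
                client_models, client_sizes = [], []
                for X, y in clients:
                    w = w_edge.copy()            # client starts from the edge model
                    for _ in range(kappa1):      # local updates per edge aggregation
                        w = local_sgd_step(w, X, y, lr)
                    client_models.append(w)
                    client_sizes.append(len(y))
                # Lower level: the edge server averages its own clients.
                w_edge = weighted_average(client_models, client_sizes)
            edge_models.append(w_edge)
            edge_sizes.append(sum(len(y) for _, y in clients))
        # Upper level: the cloud averages the edge models.
        w_cloud = weighted_average(edge_models, edge_sizes)
    return w_cloud
```

In this sketch each client talks to the cloud (through its edge server) only once every `kappa1 * kappa2` local updates, while it synchronizes with its edge server every `kappa1` updates. Setting `kappa2 = 1` makes every edge aggregation coincide with a cloud aggregation.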

The detailed algorithm is as follows:

0x03 CONVERGENCE ANALYSIS OF HIERFAVG