Federated learning with hierarchical clustering of local updates to improve training on non-IID data

0x00 Abstract

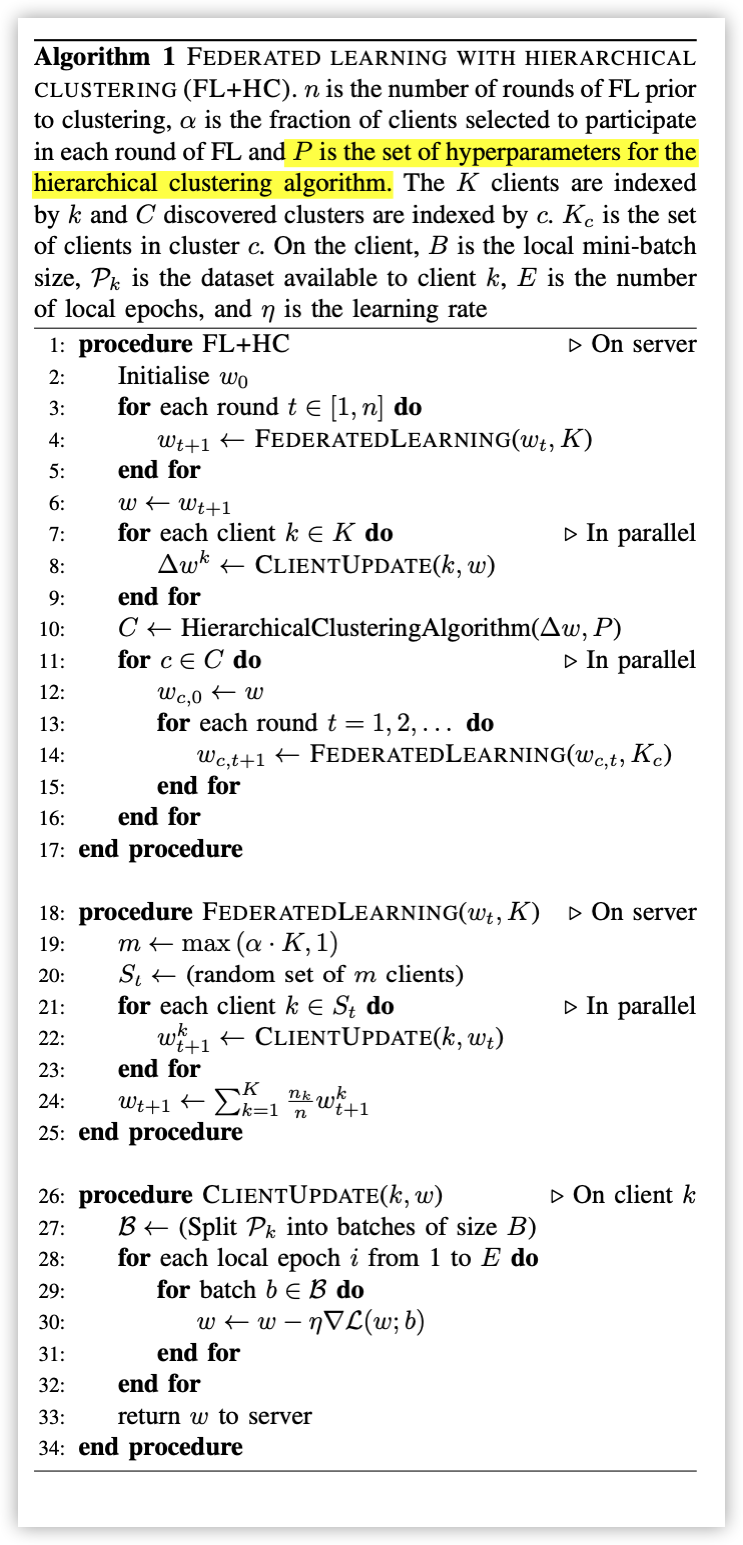

We show that learning a single joint model is often not optimal in the presence of certain types of non-iid data. In this work we present a modification to FL by introducing a hierarchical clustering step (FL+HC) to separate clusters of clients by the similarity of their local updates to the global joint model.

We show how FL+HC allows model training to converge in fewer communication rounds (significantly so under some non-iid settings) compared to FL without clustering.

Key Words: Federated Learning,Clustering,Non-IID

0x01 INTRODUCTION & BACKGROUND

In a typical scenario where data is massively distributed among clients, that data is likely to be unbalanced and non-iid. In this work we explore different non-iid data distributions and apply a hierarchical clustering algorithm to determine client similarity. In this way we can train multiple disjoint models that are targeted to clusters of similar clients with the intention to improve the performance of the training objective for all clients whilst reducing communication in the FL protocol.

Contributions:

- A method for training specialised models for subsets of clients that can increase test set accuracy whilst reducing the number of communication rounds required to reach convergence. This is achieved by clustering the clients by similarity based on their updates to the global joint model after a set number of communication rounds.

- A full characterisation of how hierarchical clustering affects test set accuracy when applied during FL training in iid and various non-iid settings.

- Recommendations for good default hyperparameters to use for training with our method when the non-iid nature of the data is unknown (as is likely to be the case when using FL).

Hierarchical clustering:

In this work we opt to use an agglomerative hierarchical clustering method which begins with all samples belonging to their own singleton cluster. Each sample is simply a vectorised local model update (the parameters of the local model).

At each step of the clustering, the pairwise distance between all clusters is calculated to judge their similarity. The two clusters that are most similar are merged.

This continues for a total of steps until a single cluster remains, containing all the samples.

Thus, a hierarchical structure of similarity between clients is constructed. This structure possesses the property of monotonicity, such that the dissimilarity of two clusters involved in merging increases with successive steps. A distance threshold can act as a hyperparameter to determine when to stop merging clusters. Intuitively, this acts to limit how dissimilar two clusters can be before stopping the merging process.

Two further hyperparameters

-

The first is the dis- tance metric uses to compute the similarity between clusters. In this work we opt to test L1 (Manhattan), L2 (Euclidean) and cosine distance metrics.

Euclidean distance is a common metric used to judge similarity between vectors, however the Manhattan distance works well for sparse vectors and is less affected by outliers. Finally, the cosine distance metric is invariant to scaling effects and therefore only indicates how closely two vectors point in the same direction.

-

The second important hyperparameter is the linkage mechanism for determining how similar two clusters are.

- [ ] Single linkage determines distance based on the most similar pair of samples across two clusters. Complete linkage determines distance based on the most dissimilar pairs of samples across two clusters.

- [x] Average linkage averages the samples within each cluster and compares distances based on these averages. Finally Ward’s linkage (which can only be combined with the Euclidean distance metric) seeks to minimise the intra-cluster variance upon merging two clusters.

主要思路:

通过模型反应数据的分布情况,模型相似度越高表示数据样本分布越相似,将模型相似的节点合并为同一个集群。

两个主要参数回答的问题:

- 怎样度量模型相似性?

- 度量值如何选择?极大?极小?还是均值?

0x02 FEDERATED LEARNING WITH HIERARCHICAL CLUSTERING

具体算法如下:

对于关键函数 HierarchicalClusteringAlgorithm原文中的解释如下:

For our experiments with applying hierarchical clustering to FL in order to provide better, personalised models targeted to clients with similar updates, we first train a global model for n communication rounds. This global model is then trained for a further 3 epochs on all clients to produce ∆w (the difference between the global model and local model parameters). The model parameters are reshaped to form a vector and used as feature inputs to the agglomerative hierarchical clustering al- gorithm. The clustering algorithm returns a number of clusters each containing subsets of clients that are most similar to one another.

FL then proceeds for each cluster independently for a total of 50 communication rounds.

Set Up:

-

MNIST -

Clients:100 -

IID- 每个节点上的数据分布是相同的,均有600个样本

-

Non-IID- 每个节点上的数据只有两种label,各300个样本

- 将数据养样本分成4组:每组会有两种label交换、下发到25个节点。(分成4组的原因就是聚类四组)

-

CNNTo perform machine learning on this dataset, a simple con- volutional neural network was designed taking single channel, 28 x 28 pixel images and passing these through two 5x5 convolutional layers (32 and 64 channels respectively) with Relu activations. Each convolutional layer is followed by a 2x2 max pooling layer. Finally the network passes data through a fully connected dense layer with 512 units and Relu activations and provides output via a softmax classification over the 10 possible digit labels (62 in the case of FEMNIST).

-

Baseline:FederatedAveraging -

Optimizer:Mini-Batch SGDA batch size of 10 over 3 epochs per communication round and set the learning rate to 0.1

0x03 RESULTS & DISCUSSION

有时间再看吧!

最近只想当个FW!